A unit of Write Capacity enables you to perform one write per second for items of up to 1KB in size. Similarly, a unit of Read Capacity enables you to perform one strongly consistent read per second (or two eventually consistent reads per second) of items of up to 4KB in size.

How do I estimate how many read and write capacity units I need for my application?

You can calculate the number of units of read and write capacity you need by estimating the number of reads or writes you need to do per second and multiplying by the size of your items, rounded up to the nearest 4KB block for reads and the nearest 1KB block for writes.

Units of Capacity required for writes = Number of item writes per second x item size in 1KB blocks

Units of Capacity required for reads = Number of item reads per second x item size in 4KB blocks

If you use eventually consistent reads, you'll get twice the throughput in terms of reads per second. If your items are less than 1KB in size, then each unit of Read Capacity will give you 1 strongly consistent read/second and each unit of Write Capacity will give you 1 write/second of capacity.

If a table has a very small number of heavily accessed partition key elements, possibly even a single very heavily used partition key element, traffic is concentrated on a small number of partitions, potentially only one partition. If the workload is heavily unbalanced, meaning disproportionately focused on one or a few partitions, the operations will not achieve the overall provisioned throughput level. To get the most out of Amazon DynamoDB throughput, build tables where the partition key element has a large number of distinct values, and values are requested fairly uniformly, as randomly as possible. An example of a good primary key is CustomerID if the application has many customers and requests made to various customer records tend to be more or less uniform. An example of a heavily skewed primary key is "Product Category Name" where certain product categories are more popular than the rest.
You should set up your data model so that your requests result in a fairly even distribution of traffic across primary keys.
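
To make the effect of key choice concrete, here is a minimal Python sketch that compares how requests spread across partitions for a uniform key (CustomerID) and a skewed key (Product Category Name). DynamoDB's actual hash function and partition count are internal to the service. The MD5-based `partition_for` helper, the count of 8 partitions, and the sample request mixes are assumptions made purely for illustration.

```python
import hashlib
from collections import Counter


def partition_for(key: str, num_partitions: int) -> int:
    """Map a partition key value to a partition index.

    Assumption: MD5 stands in for the service's internal hash function.
    """
    digest = hashlib.md5(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_partitions


def hottest_partition_share(request_keys, num_partitions: int) -> float:
    """Return the fraction of all requests that land on the busiest partition."""
    counts = Counter(partition_for(k, num_partitions) for k in request_keys)
    return max(counts.values()) / len(request_keys)


NUM_PARTITIONS = 8  # hypothetical partition count

# Uniform workload: many distinct customer IDs, each requested equally often.
uniform_requests = [f"customer-{i}" for i in range(10_000)]

# Skewed workload: one popular product category receives 90% of the requests.
skewed_requests = ["Electronics"] * 9_000 + [f"category-{i}" for i in range(1_000)]

uniform_share = hottest_partition_share(uniform_requests, NUM_PARTITIONS)
skewed_share = hottest_partition_share(skewed_requests, NUM_PARTITIONS)

print(f"hottest partition share, uniform keys: {uniform_share:.2f}")
print(f"hottest partition share, skewed keys:  {skewed_share:.2f}")
```

With uniform keys the busiest partition handles roughly its fair share of the traffic. With the skewed key a single partition absorbs at least 90% of it, so most of the provisioned throughput on the other partitions goes unused.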

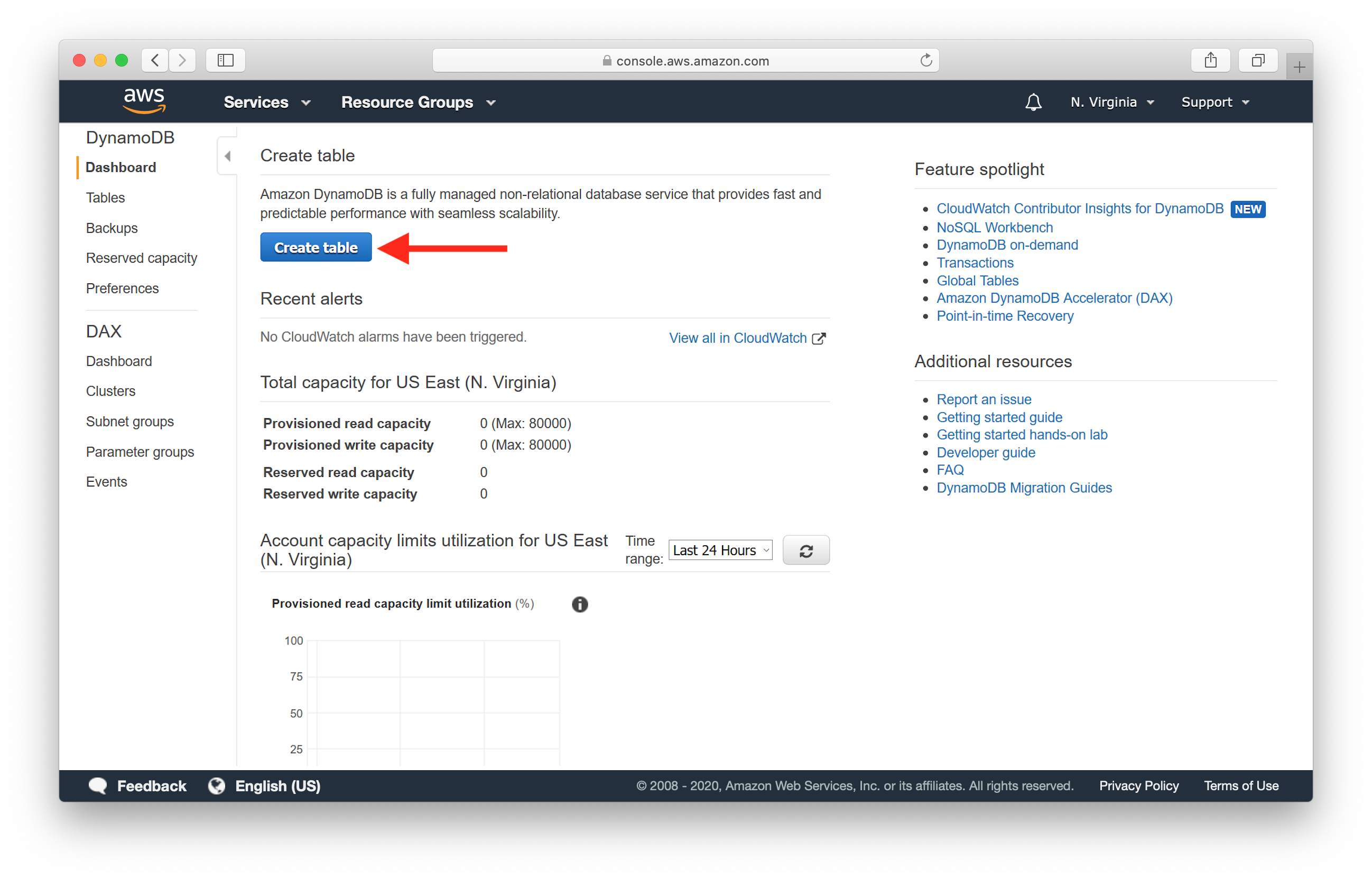

When storing data, Amazon DynamoDB divides a table into multiple partitions and distributes the data based on the partition key element of the primary key. While allocating capacity resources, Amazon DynamoDB assumes a relatively random access pattern across all primary keys.

Rather than asking you to think about instances, hardware, memory, and other factors that could affect your throughput rate, we simply ask you to provision the throughput level you want to achieve. Behind the scenes, the service handles the provisioning of resources to achieve the requested throughput rate. This is the provisioned throughput model of service. During creation of a new table or global secondary index, Auto Scaling is enabled by default, with default settings for target utilization and minimum and maximum capacity. Alternatively, you can specify your required read and write capacity manually, and Amazon DynamoDB automatically partitions and reserves the appropriate amount of resources to meet your throughput requirements.

Amazon DynamoDB Auto Scaling adjusts throughput capacity automatically as request volumes change, based on your desired target utilization and minimum and maximum capacity limits. Alternatively, you can manually specify the request throughput you want your table to be able to achieve.
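
If you specify throughput manually, the read and write capacity formulas given earlier in this section can be turned into a small estimator. The Python sketch below is illustrative only. The function names and the sample workload numbers are hypothetical, and the block sizes (4KB per read unit, 1KB per write unit) are taken from the formulas above.

```python
import math

READ_BLOCK_KB = 4   # one read capacity unit covers items of up to 4KB
WRITE_BLOCK_KB = 1  # one write capacity unit covers items of up to 1KB


def read_capacity_units(reads_per_second: float, item_size_kb: float,
                        strongly_consistent: bool = True) -> int:
    """Estimate read capacity units: reads per second x item size in 4KB blocks."""
    blocks = max(1, math.ceil(item_size_kb / READ_BLOCK_KB))
    units = reads_per_second * blocks
    if not strongly_consistent:
        # Eventually consistent reads get twice the throughput per unit.
        units = units / 2
    return math.ceil(units)


def write_capacity_units(writes_per_second: float, item_size_kb: float) -> int:
    """Estimate write capacity units: writes per second x item size in 1KB blocks."""
    blocks = max(1, math.ceil(item_size_kb / WRITE_BLOCK_KB))
    return math.ceil(writes_per_second * blocks)


# Hypothetical workload: 80 reads/s and 100 writes/s.
print(read_capacity_units(80, 3))                             # 3KB items fit in one 4KB block
print(read_capacity_units(80, 3, strongly_consistent=False))  # half the units
print(read_capacity_units(80, 6))                             # 6KB items need two 4KB blocks
print(write_capacity_units(100, 2.5))                         # 2.5KB items round up to 3 blocks
```

Rounding the item size up to whole blocks before multiplying matters: a 6KB item costs two read units per strongly consistent read, not 1.5.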